Magdeburg Lectures on Optimization and Control: Bundle Methods -- A Flexible Tool in Convex Relaxation and Optimization

Magdeburg Lectures on Optimization and Control: Bundle Methods -- A Flexible Tool in Convex Relaxation and Optimization

- Datum: 24.10.2017

- Uhrzeit: 17:00 - 19:00

- Vortragende(r): Prof. Dr. Christoph Helmberg, TU Chemnitz

- Ort: Festung Mark (oberes Gewölbe)

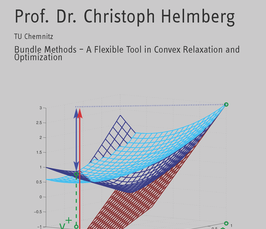

Bundle Methods -- A Flexible Tool in Convex Relaxation and Optimization

Solution approaches for large scale integer or stochastic optimization problems frequently employ Lagrangian relaxation or decomposition in order to break the problem into manageable pieces. Suitable multipliers are then determined by nonsmooth convex minimization algorithms. In this, subgradient algorithms are frequently employed because they are easy to implement and they have optimal convergence properties for first order oracles. Bundle methods try to make better use of the same information by collecting it over time. While their convergence properties are hard to pin down, the choice of the cutting model and proximal term offers a lot of flexibility to adapt the method to ones needs. The choice of the proximal term allows to introduce scaling information. When dealing with sums of convex functions bundle methods open new possibilities for asynchronous parallel optimization approaches. In semidefinite optimization a specialize d cutting and scaling model allows to move from first order towards second order behavior. In Lagrangian relaxation the generation of approximate primal solutions admits primal cutting plane approaches. Based on examples from scheduling, train timetabling and graph partitioning we illustrate a selection of these aspects, highlight some of the theory involved and discuss a few implementational issues arising in the callable library ConicBundle.